Flow Matching and Diffusion Models

July 28, 2025

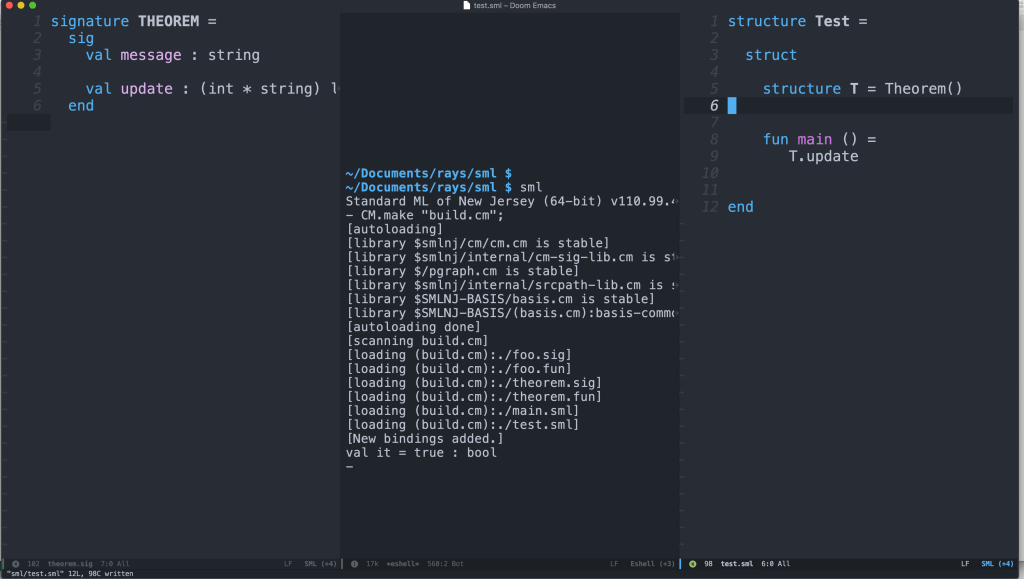

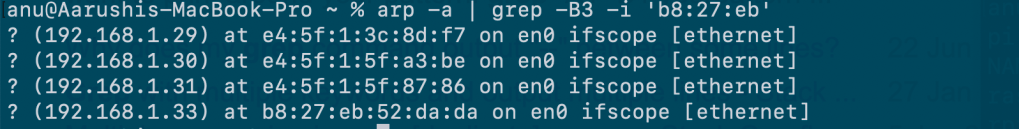

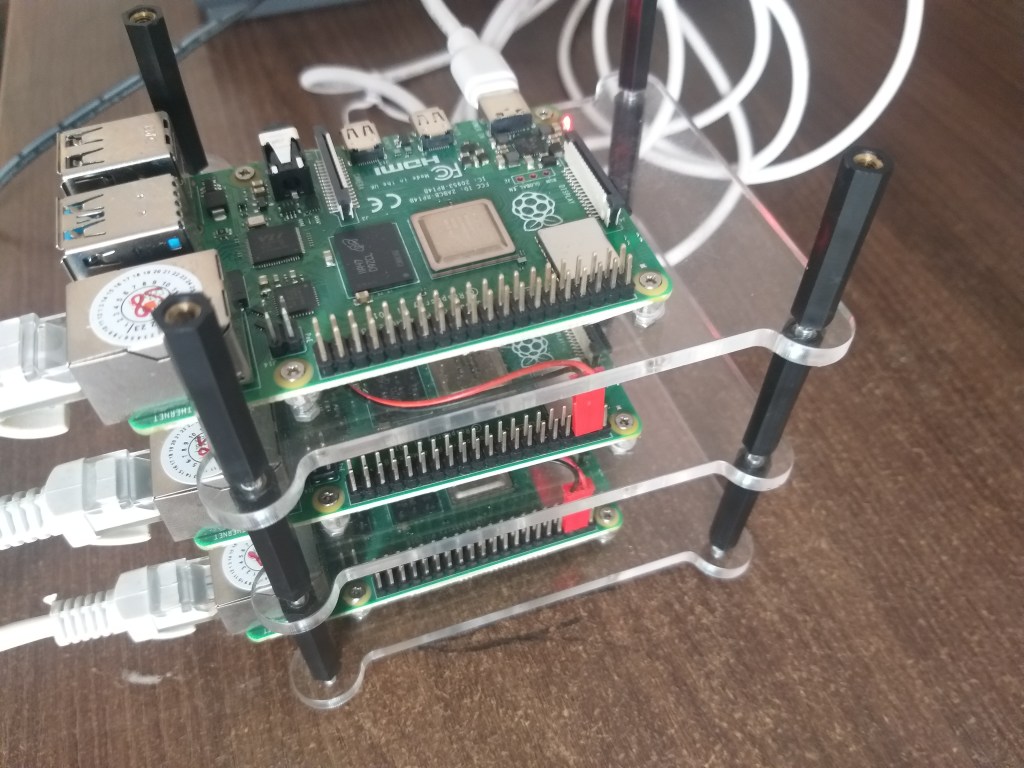

I transcribe the MIT 6.S184 lectures (Flow Matching and Diffusion Models: Generative AI with SDEs). I also try to draw some of the figures as TikZ diagrams.

tl;dr

- Ordinary differential equations have to be studied separately.

- Stochastic differential equations are a separate subject.

- I believe I need to solve the exercises in code to understand everything reasonably well.

Lecture 1

Conditional generation means sampling from the conditional data distribution.

Generative models generate samples from the data distribution $p_{\text{data}}$.

Initial distribution: $p_{\text{init}}$.

The default is $p_{\text{init}} = \mathcal{N}(0, I_d)$.

Flow Model

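Trajectory: $X : [0,1] \to \mathbb{R}^d,\ t \mapsto X_t$. So for each time $t$ we get a vector $X_t$ out.

Vector field: $u : \mathbb{R}^d \times [0,1] \to \mathbb{R}^d,\ (x, t) \mapsto u_t(x)$. (It has a space component $x$ and a time component $t$.)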

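Flow: $\psi : \mathbb{R}^d \times [0,1] \to \mathbb{R}^d,\ (x_0, t) \mapsto \psi_t(x_0)$ with

$$\frac{d}{dt}\psi_t(x_0) = u_t(\psi_t(x_0)), \qquad \psi_0(x_0) = x_0.$$

This means that for every initial condition $x_0$ I want $\psi_t(x_0)$ to be a solution to my ODE. $\psi_0(x_0) = x_0$ is the initial condition, and the time derivative of $\psi_t(x_0)$ is the vector field evaluated at the current point $\psi_t(x_0)$.

Neural network: $u_t^\theta$, a vector field with parameters $\theta$.

Random initialization: $X_0 \sim p_{\text{init}}$.

Ordinary differential equation (time derivative): $\frac{d}{dt}X_t = u_t^\theta(X_t)$.

Goal: simulate the ODE to get $X_1 \sim p_{\text{data}}$.

This means that a flow is a collection of trajectories, one per initial condition, that all conform to the ODE.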
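To make the two defining properties of a flow concrete, here is a minimal sketch assuming the toy vector field $u_t(x) = -x$ (my own choice for illustration, not from the lecture), whose flow is known in closed form, $\psi_t(x_0) = e^{-t}x_0$. The time derivative is approximated by a central difference quotient.

```python
import numpy as np

def u(x, t):
    """Toy vector field u_t(x) = -x (an assumption chosen for illustration)."""
    return -x

def psi(x0, t):
    """Closed-form flow of the toy field: psi_t(x0) = exp(-t) * x0."""
    return np.exp(-t) * x0

x0 = np.array([1.0, -2.0, 0.5])  # three initial conditions = three trajectories
h = 1e-5

# Property 1: psi_0(x0) = x0 (the initial condition).
init_error = np.max(np.abs(psi(x0, 0.0) - x0))

# Property 2: d/dt psi_t(x0) = u_t(psi_t(x0)), checked at a few times.
ode_errors = []
for t in [0.1, 0.5, 0.9]:
    dpsi_dt = (psi(x0, t + h) - psi(x0, t - h)) / (2 * h)  # central difference
    ode_errors.append(np.max(np.abs(dpsi_dt - u(psi(x0, t), t))))

print(init_error, max(ode_errors))  # both essentially zero
```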

Diffusion Model

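Stochastic process: $(X_t)_{0 \le t \le 1}$.

$X_t$ is a random variable for every $t$.

Vector field $u_t(x)$ plus a diffusion coefficient $\sigma_t \ge 0$.

Stochastic differential equation:

$$dX_t = u_t(X_t)\,dt + \sigma_t\,dW_t, \qquad X_0 = x_0.$$

This means that the change of $X_t$ in time is given by the direction of the vector field, plus Brownian noise $dW_t$ scaled by $\sigma_t$.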

Brownian Motion

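$W = (W_t)_{t \ge 0}$ is a stochastic process, and in this case time can be infinite: we don't have to stop at $t = 1$. It has three properties:

1. $W_0 = 0$.
2. Gaussian increments. What does that mean? For $0 \le s < t$, $W_t - W_s \sim \mathcal{N}(0, (t - s) I_d)$. Here $s$ and $t$ are two arbitrary time points with $s$ before $t$, and the variance of the Gaussian grows linearly with the elapsed time $t - s$.
3. Independent increments. This means that for $0 \le t_0 < t_1 < \dots < t_n$, the increments $W_{t_1} - W_{t_0}, \dots, W_{t_n} - W_{t_{n-1}}$ are independent random variables.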
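The three properties translate directly into a simulation recipe: start at zero and add independent $\mathcal{N}(0, h)$ increments. A minimal sketch, assuming a uniform time grid (the function name `brownian_paths` is hypothetical):

```python
import numpy as np

def brownian_paths(n_paths, n_steps, t_max, d=1, seed=0):
    """Simulate Brownian motion on a uniform grid over [0, t_max] (grid is an assumption)."""
    rng = np.random.default_rng(seed)
    h = t_max / n_steps
    # Independent Gaussian increments W_{t+h} - W_t ~ N(0, h I_d)
    increments = rng.normal(0.0, np.sqrt(h), size=(n_paths, n_steps, d))
    # W_0 = 0, followed by the running sum of the increments
    start = np.zeros((n_paths, 1, d))
    return np.concatenate([start, np.cumsum(increments, axis=1)], axis=1)

W = brownian_paths(n_paths=20000, n_steps=100, t_max=2.0)  # grid step h = 0.02

# Increment from s = 0.5 (index 25) to t = 2.0 (index 100): variance should be near t - s = 1.5
var_increment = np.var(W[:, 100, 0] - W[:, 25, 0])
# Increments over the disjoint intervals [0, 0.5] and [0.5, 2.0] should be uncorrelated
corr = np.corrcoef(W[:, 25, 0] - W[:, 0, 0], W[:, 100, 0] - W[:, 25, 0])[0, 1]
print(var_increment, corr)
```

The time horizon `t_max = 2.0` deliberately goes past $t = 1$, since Brownian motion does not have to stop there.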

So at this stage, to understand the following, I need a book or another course on ODEs.

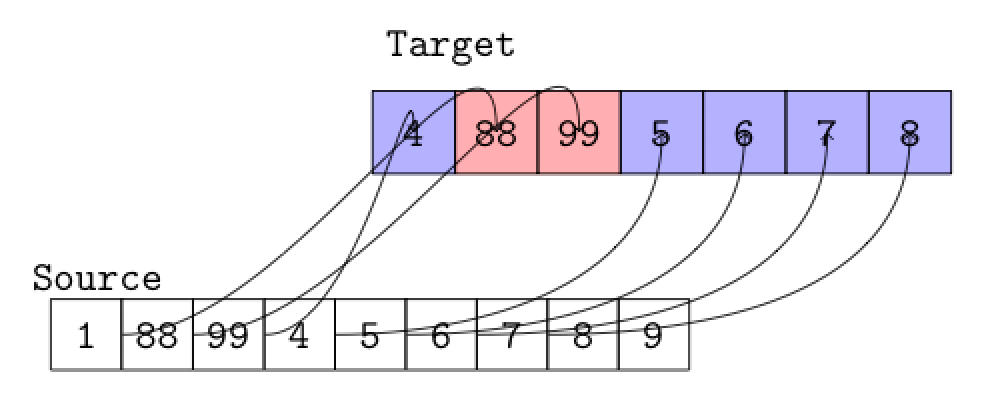

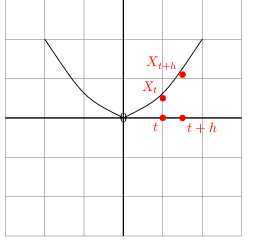

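This means that a trajectory of the ODE satisfies

$$X_{t+h} = X_t + h\,u_t(X_t) + h\,R_t(h),$$

i.e. the next point equals the current point $X_t$, plus $h$ times the direction of the vector field, plus a remainder term $h\,R_t(h)$ with $\lim_{h \to 0} R_t(h) = 0$ that can be ignored for small steps $h$.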
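Dropping the remainder term gives the Euler method. A minimal sketch, assuming the toy field $u_t(x) = -x$ again (my choice), for which the exact answer $X_1 = e^{-1}X_0$ is known, so we can watch the error shrink as $h$ gets smaller:

```python
import numpy as np

def euler(u, x0, n_steps, t0=0.0, t1=1.0):
    """Simulate dX_t/dt = u_t(X_t) with the update X_{t+h} = X_t + h u_t(X_t)."""
    h = (t1 - t0) / n_steps
    x, t = np.asarray(x0, dtype=float), t0
    for _ in range(n_steps):
        x = x + h * u(x, t)  # the remainder term h R_t(h) is dropped
        t += h
    return x

def u(x, t):
    return -x  # toy vector field (an assumption); exact solution X_1 = exp(-1) X_0

x0 = np.array([1.0, -2.0])
errors = []
for n in [10, 100, 1000]:
    errors.append(np.max(np.abs(euler(u, x0, n) - np.exp(-1.0) * x0)))
    print(n, errors[-1])  # the error shrinks roughly in proportion to h = 1/n
```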

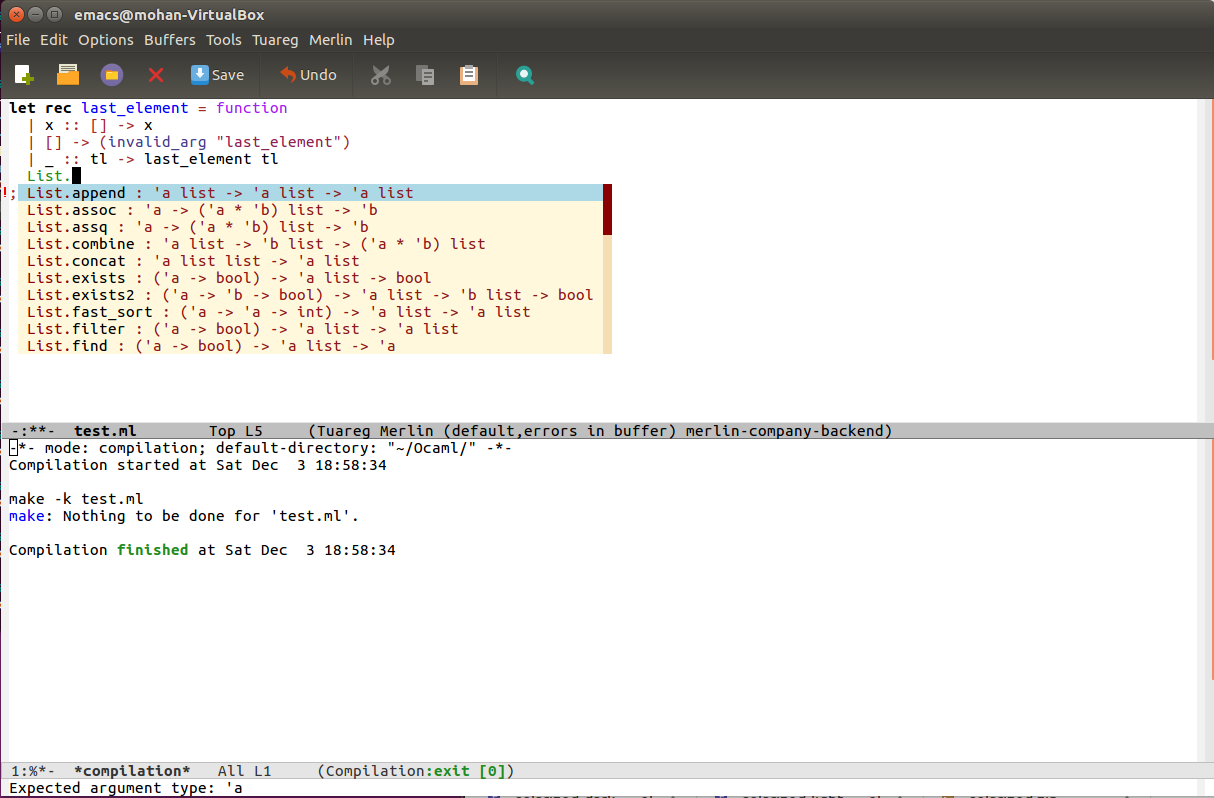

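How are derivatives defined?

$$\frac{d}{dt}X_t = \lim_{h \to 0}\frac{X_{t+h} - X_t}{h}$$

This is the basic definition that I have to understand by learning calculus.

Derivative of a trajectory: if $X_t$ solves the ODE, then $\frac{1}{h}(X_{t+h} - X_t) = u_t(X_t) + R_t(h)$ with $\lim_{h \to 0} R_t(h) = 0$.

Multiplying by $h$ and rearranging gives the update rule shown above.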

Ordinary Differential Equation to Stochastic Differential Equation

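This is the recap. We rewrote the ODE in a form that doesn't use derivatives, only an update step with an error term. The SDE is obtained the same way, by adding a Brownian increment:

$$X_{t+h} = X_t + h\,u_t(X_t) + \sigma_t\,(W_{t+h} - W_t) + h\,R_t(h).$$

$\sigma_t$ is the diffusion coefficient used to scale the Brownian motion. If $\sigma_t = 0$, the SDE is equivalent to the original ODE.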
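Replacing the Brownian increment $W_{t+h} - W_t$ by $\sqrt{h}\,\epsilon$ with $\epsilon \sim \mathcal{N}(0, I_d)$ gives the Euler–Maruyama method. A minimal sketch, assuming the toy drift $u_t(x) = -x$ and a constant $\sigma_t$ (both my choices): with $\sigma_t = 0$ it reduces to the Euler ODE steps, and with $\sigma_t = 1$ the spread of many paths settles near $\sigma^2/2 = 0.5$.

```python
import numpy as np

def euler_maruyama(u, sigma, x0, n_steps, t0=0.0, t1=1.0, seed=0):
    """Simulate dX_t = u_t(X_t) dt + sigma_t dW_t on [t0, t1].

    Update: X_{t+h} = X_t + h u_t(X_t) + sigma_t (W_{t+h} - W_t), with the
    Brownian increment sampled as sqrt(h) * eps, eps ~ N(0, I).
    """
    rng = np.random.default_rng(seed)
    h = (t1 - t0) / n_steps
    x, t = np.array(x0, dtype=float), t0
    for _ in range(n_steps):
        eps = rng.standard_normal(x.shape)
        x = x + h * u(x, t) + sigma(t) * np.sqrt(h) * eps
        t += h
    return x

def u(x, t):
    return -x  # toy drift (an assumption): pulls X_t back towards 0

# sigma_t = 0: the SDE is the ODE, i.e. plain Euler steps x <- (1 - h) x
x_ode = euler_maruyama(u, lambda t: 0.0, np.ones(3), n_steps=100)
print(x_ode)  # matches (1 - 0.01) ** 100 up to rounding

# sigma_t = 1: many independent paths; their variance settles near 0.5
x_sde = euler_maruyama(u, lambda t: 1.0, np.zeros(20000), n_steps=2000, t1=10.0)
stationary_var = x_sde.var()
print(stationary_var)
```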

Why do we need Brownian Motion ?

I didn't really follow this, but the answer given was that Brownian motion is universal among stochastic processes in the same way that the Gaussian distribution is universal among random variables.

Lecture 2

Reminder of what was covered in Lecture 1

Deriving a Training Target

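Typically, we train the model by minimizing a mean-squared error such as $\lVert u_t^\theta(x) - u_t^{\text{target}}(x)\rVert^2$, where $u_t^{\text{target}}$ is the training target.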

In regression or classification, the training target is the label. Here we have no label. We have to derive a training target.

The professor states that you don't have to understand all the derivations. I anticipate some mathematics I haven't studied before.

We have to make sure we understand the formulas for these.

The key terms to remember are the following.

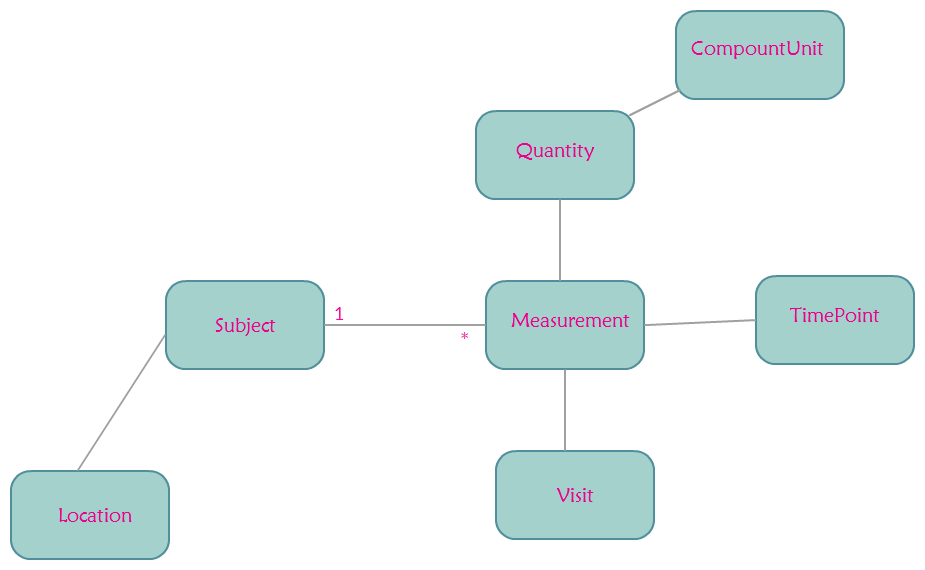

Conditional and Marginal Probability Path

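Conditional probability path $p_t(\cdot|z)$: $p_0(\cdot|z) = p_{\text{init}}$ and $p_1(\cdot|z) = \delta_z$. I don't fully understand this Dirac distribution $\delta_z$ as of now, but it seems to always return the same point: sampling $X \sim \delta_z$ gives $X = z$.

Example: Gaussian Probability Path

$$p_t(\cdot|z) = \mathcal{N}(\alpha_t z, \beta_t^2 I_d),$$

where the noise schedulers $\alpha_t, \beta_t$ satisfy $\alpha_0 = \beta_1 = 0$ and $\alpha_1 = \beta_0 = 1$.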

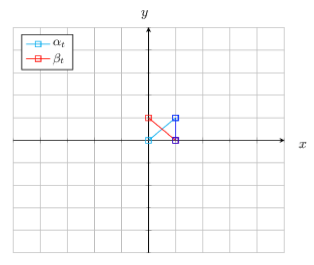

The diagram is small. But the idea is that at time 0 the mean is 0 and the variance is 1, which is $p_{\text{init}} = \mathcal{N}(0, I_d)$, and at time 1 the mean is $z$ and the variance is 0.

The distribution with variance 0 is the Dirac delta $\delta_z$.
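Sampling from the Gaussian path only needs $x = \alpha_t z + \beta_t\epsilon$. A minimal sketch, assuming the linear schedule $\alpha_t = t$, $\beta_t = 1 - t$ (one valid choice, not the only one), that checks the two endpoints described above:

```python
import numpy as np

def alpha(t):
    return t        # assumed linear schedule: alpha_0 = 0, alpha_1 = 1

def beta(t):
    return 1.0 - t  # beta_0 = 1, beta_1 = 0

def sample_conditional_path(z, t, n, rng):
    """Draw n samples x ~ p_t(.|z) = N(alpha_t z, beta_t^2 I)."""
    eps = rng.standard_normal((n, z.shape[0]))
    return alpha(t) * z + beta(t) * eps

rng = np.random.default_rng(0)
z = np.array([3.0, -1.0])
stats = {}
for t in [0.0, 0.5, 1.0]:
    x = sample_conditional_path(z, t, 50000, rng)
    stats[t] = (x.mean(axis=0), x.var(axis=0))
    print(t, stats[t][0].round(2), stats[t][1].round(2))
# t = 0: mean ~ 0, variance ~ 1 (p_init); t = 1: every sample equals z (Dirac delta)
```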

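Example: Marginal Probability Path

This is not clear at this stage. But we take one data point $z \sim p_{\text{data}}$ (sampling), then $x \sim p_t(\cdot|z)$, and the marginal probability path means that we forget $z$:

$$z \sim p_{\text{data}},\ x \sim p_t(\cdot|z) \implies x \sim p_t.$$

As far as I understand, the data distribution and the conditional path together lead to the marginal path.

The density formula is not clear at this stage:

$$p_t(x) = \int p_t(x|z)\,p_{\text{data}}(z)\,dz.$$

All of this seems to describe how we move from noise to the distribution of the data we are dealing with: $p_0 = p_{\text{init}}$ and $p_1 = p_{\text{data}}$.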
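The "sample $z$, sample $x$, forget $z$" recipe can be sketched in a few lines. This assumes a toy $p_{\text{data}}$ that is uniform over the two points $\pm 2$ in 1D and the linear schedule $\alpha_t = t$, $\beta_t = 1 - t$ (both my choices):

```python
import numpy as np

rng = np.random.default_rng(1)
data = np.array([-2.0, 2.0])  # toy p_data: uniform over two points (an assumption)

def sample_marginal_path(t, n):
    """x ~ p_t: draw z ~ p_data, then x ~ N(t z, (1 - t)^2); z is then forgotten."""
    z = rng.choice(data, size=n)
    eps = rng.standard_normal(n)
    return t * z + (1.0 - t) * eps

x0 = sample_marginal_path(0.0, 100000)  # p_0 = p_init = N(0, 1)
x1 = sample_marginal_path(1.0, 100000)  # p_1 = p_data
print(x0.mean(), x0.var())  # about 0 and 1
print(np.unique(x1))        # only the two data points remain
```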

Conditional and Marginal Vector Field

Conditional Vector field

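We want $u_t^{\text{target}}(x|z)$ such that, starting from an initial point $X_0 \sim p_{\text{init}}$ and simulating the ODE

$$\frac{d}{dt}X_t = u_t^{\text{target}}(X_t|z),$$

the distribution of $X_t$ at every time point $t$ is given by the conditional probability path: $X_t \sim p_t(\cdot|z)$.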

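Example: Conditional Gaussian Vector field

$$u_t^{\text{target}}(x|z) = \left(\dot\alpha_t - \frac{\dot\beta_t}{\beta_t}\alpha_t\right)z + \frac{\dot\beta_t}{\beta_t}x$$

$\dot\alpha_t = \partial_t\alpha_t$ is the time derivative; the dot notation is from physics.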
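A quick numerical check that this field really follows the conditional path. This is a minimal sketch assuming $\alpha_t = t$, $\beta_t = 1 - t$ (so $\dot\alpha_t = 1$, $\dot\beta_t = -1$), simulated with Euler steps at $t = 0, h, \dots, 1 - h$ so that $\beta_t$ never hits zero:

```python
import numpy as np

# Assumed linear schedule and its time derivatives
def alpha(t): return t
def beta(t): return 1.0 - t
def alpha_dot(t): return 1.0
def beta_dot(t): return -1.0

def u_cond(x, t, z):
    """Conditional Gaussian vector field (alpha_dot - beta_dot/beta * alpha) z + beta_dot/beta x."""
    return (alpha_dot(t) - beta_dot(t) / beta(t) * alpha(t)) * z + beta_dot(t) / beta(t) * x

rng = np.random.default_rng(0)
z = np.array([3.0, -1.0])
x = rng.standard_normal((10000, 2))  # X_0 ~ p_init = N(0, I)
n_steps = 100
h = 1.0 / n_steps
for k in range(n_steps):
    if k == n_steps // 2:
        x_half = x.copy()  # snapshot of X_t at t = 0.5
    x = x + h * u_cond(x, k * h, z)

print(x_half.mean(axis=0), x_half.var(axis=0))  # about t z = [1.5, -0.5] and (1 - t)^2 = 0.25
print(np.abs(x - z).max())                       # essentially 0: every path ends at z
```

For this linear schedule the field simplifies to $(z - x)/(1 - t)$, which moves each point along a straight line to $z$.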

Marginalization trick

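The marginal vector field is

$$u_t^{\text{target}}(x) = \int u_t^{\text{target}}(x|z)\,\frac{p_t(x|z)\,p_{\text{data}}(z)}{p_t(x)}\,dz.$$

It is not very clear to me at this point, but the fraction is an application of Bayes' rule: it is the posterior distribution of $z$ given $x$. Which data point $z$ could have given rise to $x$?

If $X_0 \sim p_{\text{init}}$ and $\frac{d}{dt}X_t = u_t^{\text{target}}(X_t)$, then the distribution of $X_t$ at every time point is given by the marginal path: $X_t \sim p_t$.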
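For a dataset with finitely many points, the integral becomes a sum and the posterior is a softmax over log-densities. A minimal sketch, assuming a toy 1D $p_{\text{data}}$ uniform over $\pm 2$ and the schedule $\alpha_t = t$, $\beta_t = 1 - t$: simulating the marginal field from noise should carry the samples onto the data points, roughly half to each.

```python
import numpy as np

data = np.array([-2.0, 2.0])  # toy p_data: uniform over two points (an assumption)

def u_marginal(x, t):
    """Marginal field for alpha_t = t, beta_t = 1 - t and a finite, uniform dataset.

    u_t(x) = sum_j w_j(x) u_t(x|z_j), where the posterior weights w_j(x) are
    proportional to p_t(x|z_j) p_data(z_j) (Bayes' rule; the uniform prior cancels).
    """
    b = 1.0 - t
    log_w = -((x[:, None] - t * data[None, :]) ** 2) / (2 * b**2)  # log N(x; t z_j, b^2) + const
    log_w -= log_w.max(axis=1, keepdims=True)                         # numerical stability
    w = np.exp(log_w)
    w /= w.sum(axis=1, keepdims=True)
    u_cond = (data[None, :] - x[:, None]) / b                         # u_t(x|z_j) = (z_j - x) / (1 - t)
    return (w * u_cond).sum(axis=1)

rng = np.random.default_rng(0)
x = rng.standard_normal(2000)  # X_0 ~ p_init = N(0, 1)
n_steps = 200
h = 1.0 / n_steps
for k in range(n_steps):
    x = x + h * u_marginal(x, k * h)  # Euler steps; t stays below 1

dist_to_data = np.abs(x[:, None] - data[None, :]).min(axis=1)
print((dist_to_data < 0.1).mean(), (x > 0).mean())  # nearly all samples at -2 or 2, about half each
```

No $z$ is ever passed to `u_marginal`: the field only needs $x$ and $t$, which is exactly why it can turn noise into data.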

Proof of Marginalization Trick

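We start with the left side of the continuity equation $\partial_t p_t(x) = -\operatorname{div}(p_t\,u_t^{\text{target}})(x)$ (the equation is stated in the notes) and plug in the marginal density:

$$\partial_t p_t(x) = \partial_t\int p_t(x|z)\,p_{\text{data}}(z)\,dz.$$

We can swap integrals and derivatives under certain conditions. Which conditions? Roughly, $\partial_t p_t(x|z)\,p_{\text{data}}(z)$ must be bounded, for $t$ in a neighborhood, by an integrable function of $z$ (the Leibniz integral rule, justified by dominated convergence):

$$= \int\partial_t p_t(x|z)\,p_{\text{data}}(z)\,dz.$$

This can be rewritten with the continuity equation applied to the conditional probability path:

$$= \int -\operatorname{div}\big(p_t(\cdot|z)\,u_t^{\text{target}}(\cdot|z)\big)(x)\,p_{\text{data}}(z)\,dz.$$

What is the divergence operator? It seems to have applications in physics, so it is not entirely clear to me. But it is

$$\operatorname{div}(v)(x) = \sum_{i=1}^d\frac{\partial}{\partial x_i}v_i(x).$$

Since it is a sum of derivatives, it is linear, and we can move it outside the integral:

$$= -\operatorname{div}\left(\int p_t(x|z)\,u_t^{\text{target}}(x|z)\,p_{\text{data}}(z)\,dz\right).$$

Multiplying and dividing by $p_t(x)$, we get

$$= -\operatorname{div}\left(p_t(x)\int u_t^{\text{target}}(x|z)\,\frac{p_t(x|z)\,p_{\text{data}}(z)}{p_t(x)}\,dz\right) = -\operatorname{div}\big(p_t\,u_t^{\text{target}}\big)(x).$$

I didn't fully follow this, but the result is exactly the continuity equation for the marginal path, which is what we wanted to show.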
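The conditional continuity equation used in the proof can be checked numerically. A minimal sketch, assuming 1D (so the divergence is just $\partial/\partial x$), the schedule $\alpha_t = t$, $\beta_t = 1 - t$, and an arbitrary point $z = 1.5$ and time $t = 0.3$; both sides are approximated by central differences:

```python
import numpy as np

def p_cond(x, t, z):
    """Density of N(t z, (1 - t)^2) in 1D (Gaussian path with alpha_t = t, beta_t = 1 - t)."""
    b = 1.0 - t
    return np.exp(-((x - t * z) ** 2) / (2 * b**2)) / (np.sqrt(2 * np.pi) * b)

def u_cond(x, t, z):
    """Conditional Gaussian vector field for the same path: (z - x) / (1 - t)."""
    return (z - x) / (1.0 - t)

z, t, h = 1.5, 0.3, 1e-5
x = np.linspace(-3.0, 4.0, 201)

def flux(xx):
    """Probability flux p_t(x|z) u_t(x|z)."""
    return p_cond(xx, t, z) * u_cond(xx, t, z)

lhs = (p_cond(x, t + h, z) - p_cond(x, t - h, z)) / (2 * h)  # d/dt p_t(x|z)
rhs = -(flux(x + h) - flux(x - h)) / (2 * h)                  # -div(p_t u_t), 1D divergence = d/dx
print(np.max(np.abs(lhs - rhs)))  # tiny: the continuity equation holds
```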

Flow Matching

This is about learning the Marginal Vector field.

So we start with a neural net $u_t^\theta$ and want it to match $u_t^{\text{target}}$.

Flow Matching Loss

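We regress the network onto the marginal vector field:

$$\mathcal{L}_{\text{FM}}(\theta) = \mathbb{E}_{t \sim \text{Unif}[0,1],\, x \sim p_t}\left[\lVert u_t^\theta(x) - u_t^{\text{target}}(x)\rVert^2\right].$$

But this loss is intractable, because evaluating $u_t^{\text{target}}(x)$ requires the integral over $p_{\text{data}}$ from the marginalization trick. Look at the notes above.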

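Conditional Flow Matching Loss

$$\mathcal{L}_{\text{CFM}}(\theta) = \mathbb{E}_{t \sim \text{Unif},\, z \sim p_{\text{data}},\, x \sim p_t(\cdot|z)}\left[\lVert u_t^\theta(x) - u_t^{\text{target}}(x|z)\rVert^2\right]$$

This loss is tractable, because the conditional vector field has a closed form.

Theorem: $\mathcal{L}_{\text{FM}}(\theta) = \mathcal{L}_{\text{CFM}}(\theta) + C$, where $C$ does not depend on $\theta$.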

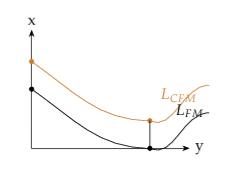

The image above roughly shows the two losses separated by a constant.

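There are two implications:

1. $\nabla_\theta\mathcal{L}_{\text{FM}}(\theta) = \nabla_\theta\mathcal{L}_{\text{CFM}}(\theta)$, so minimizing the tractable CFM loss with gradient descent is the same as minimizing the FM loss.

2. The minimizer $\theta^*$ of $\mathcal{L}_{\text{CFM}}$ satisfies $u_t^{\theta^*} = u_t^{\text{target}}$ (given enough network capacity).

So it holds that the trained neural network equals the marginal vector field.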
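Implication 2 can be seen in a toy case where everything is in closed form. A minimal sketch under several assumptions of mine: 1D Gaussian data $p_{\text{data}} = \mathcal{N}(\mu, s^2)$, the schedule $\alpha_t = t$, $\beta_t = 1 - t$, a single fixed time $t$, and a linear model $a + bx$ standing in for the neural network. Here $p_t$ is Gaussian, so the marginal field $u_t^{\text{target}}(x) = \mathbb{E}[z - \epsilon \mid x]$ is linear, and fitting only the conditional targets $z - \epsilon$ by least squares recovers it:

```python
import numpy as np

# Toy setting (assumptions): p_data = N(mu, s^2), alpha_t = t, beta_t = 1 - t, fixed t.
mu, s, t = 2.0, 0.5, 0.5
rng = np.random.default_rng(0)
n = 200_000
z = rng.normal(mu, s, size=n)   # z ~ p_data
eps = rng.standard_normal(n)
x = t * z + (1 - t) * eps       # x ~ p_t(.|z)
target = z - eps                # conditional target u_t(x|z) for this path

# Minimizing the CFM loss over the linear model a + b x is ordinary least squares.
A = np.stack([np.ones(n), x], axis=1)
(a_hat, b_hat), *_ = np.linalg.lstsq(A, target, rcond=None)

# Closed-form marginal field: u_t(x) = mu + b_true (x - t mu) = a_true + b_true x
var_x = t**2 * s**2 + (1 - t) ** 2
b_true = (t * s**2 - (1 - t)) / var_x
a_true = mu - b_true * t * mu
print(a_hat, b_hat)    # close to a_true = 3.2 and b_true = -1.2
```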

I have to conclude this part before Lecture 3, as many loose threads have to be tied up. That is pending.

Lecture 3

Reminder of what was covered in Lecture 2

Conditional Prob. Path, Vector field and Score.

Marginal Prob. Path, Vector field and Score.

Flow Matching

The goal of Flow matching training is to learn the Marginal Vector field.

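What is the flow matching loss? It is a type of regression of $u_t^\theta(x)$ onto the marginal vector field $u_t^{\text{target}}(x)$ with a mean-squared error.

But since this is intractable (why? because $u_t^{\text{target}}(x)$ is an integral over the whole data distribution), we consider the Conditional Flow Matching loss instead.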

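Recall

$$p_t(\cdot|z) = \mathcal{N}(\alpha_t z, \beta_t^2 I_d)$$

and

$$u_t^{\text{target}}(x|z) = \left(\dot\alpha_t - \frac{\dot\beta_t}{\beta_t}\alpha_t\right)z + \frac{\dot\beta_t}{\beta_t}x.$$

Now we can sample noise from a standard Gaussian, scale it and add it to the scaled data point:

$$x = \alpha_t z + \beta_t\epsilon, \qquad \epsilon \sim \mathcal{N}(0, I_d).$$

I have to determine exactly how the next formula is derived. The key step is substituting $x$ into the conditional vector field: the $\alpha_t z$ terms cancel and leave $u_t^{\text{target}}(x|z) = \dot\alpha_t z + \dot\beta_t\epsilon$. This gives

$$\mathcal{L}_{\text{CFM}}(\theta) = \mathbb{E}_{t \sim \text{Unif},\, z \sim p_{\text{data}},\, \epsilon \sim \mathcal{N}(0, I_d)}\left[\lVert u_t^\theta(\alpha_t z + \beta_t\epsilon) - (\dot\alpha_t z + \dot\beta_t\epsilon)\rVert^2\right].$$

There is also a negative sign below which I couldn't understand at first. It comes from the schedule: for $\alpha_t = t$ and $\beta_t = 1 - t$ we have $\dot\alpha_t = 1$ and $\dot\beta_t = -1$, so the target becomes $z - \epsilon$.

The following looks like the simplest formula one could explain during an interview:

$$\mathcal{L}_{\text{CFM}}(\theta) = \mathbb{E}_{t, z, \epsilon}\left[\lVert u_t^\theta(t z + (1 - t)\epsilon) - (z - \epsilon)\rVert^2\right].$$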
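This loss is easy to estimate on a batch. A minimal sketch, assuming the linear schedule and a plain callable `model(x, t)` as a hypothetical stand-in for the network $u_t^\theta$ (no training loop). As a sanity check I use data that is a single point $z_0$: then the marginal field is known exactly, $(z_0 - x)/(1 - t)$, and its loss should vanish.

```python
import numpy as np

def cfm_loss(model, z, rng):
    """Monte Carlo estimate of the CFM loss for alpha_t = t, beta_t = 1 - t.

    model(x, t) is any callable returning a velocity shaped like x
    (a hypothetical stand-in for the neural network u_t^theta).
    """
    n = z.shape[0]
    t = rng.uniform(0.0, 1.0, size=(n, 1))  # t ~ Unif[0, 1)
    eps = rng.standard_normal(z.shape)      # eps ~ N(0, I)
    x = t * z + (1.0 - t) * eps             # x ~ p_t(.|z)
    target = z - eps                        # conditional vector field u_t(x|z)
    return np.mean(np.sum((model(x, t) - target) ** 2, axis=1))

rng = np.random.default_rng(0)
z0 = np.array([3.0, -1.0])
z = np.tile(z0, (1000, 1))  # toy p_data: a single point (Dirac at z0), an assumption

def exact(x, t):
    return (z0 - x) / (1.0 - t)  # marginal field when p_data is a Dirac at z0

def zero(x, t):
    return np.zeros_like(x)      # a deliberately bad model

loss_exact = cfm_loss(exact, z, rng)
loss_zero = cfm_loss(zero, z, rng)
print(loss_exact, loss_zero)  # ~0 versus clearly positive
```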

Score Matching

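Score network $s_t^\theta : \mathbb{R}^d \times [0,1] \to \mathbb{R}^d$.

Goal: $s_t^\theta(x)$ should approximate the marginal score $\nabla\log p_t(x)$ by minimizing

$$\mathcal{L}_{\text{SM}}(\theta) = \mathbb{E}_{t \sim \text{Unif},\, x \sim p_t}\left[\lVert s_t^\theta(x) - \nabla\log p_t(x)\rVert^2\right].$$

We have to show the same thing we dealt with above: the marginal loss is the same as the conditional loss up to a constant.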

Denoising Score Matching Loss

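$$\mathcal{L}_{\text{DSM}}(\theta) = \mathbb{E}_{t \sim \text{Unif},\, z \sim p_{\text{data}},\, x \sim p_t(\cdot|z)}\left[\lVert s_t^\theta(x) - \nabla\log p_t(x|z)\rVert^2\right], \qquad \mathcal{L}_{\text{SM}}(\theta) = \mathcal{L}_{\text{DSM}}(\theta) + C.$$

The proof is similar to the one for the flow matching loss and the conditional flow matching loss shown above.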

Denoising Score Matching For Gaussian Probability Path

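Next we look at the Gaussian noise. The conditional Gaussian path has score $\nabla\log p_t(x|z) = -\frac{x - \alpha_t z}{\beta_t^2}$. If the standard Gaussian noise $\epsilon$ is scaled by $\beta_t$, i.e. $x = \alpha_t z + \beta_t\epsilon$, then $x$ has the distribution $\mathcal{N}(\alpha_t z, \beta_t^2 I_d)$ and the conditional score becomes $-\epsilon/\beta_t$.

We get

$$\mathcal{L}_{\text{DSM}}(\theta) = \mathbb{E}_{t,\, z \sim p_{\text{data}},\, \epsilon \sim \mathcal{N}(0, I_d)}\left[\left\lVert s_t^\theta(\alpha_t z + \beta_t\epsilon) + \frac{\epsilon}{\beta_t}\right\rVert^2\right].$$

It is again similar to the flow matching formulas.

The instructor mentioned at this stage that this formula predicts the noise that was injected, which is why it is termed 'denoising'. Writing $\epsilon_t^\theta(x) = -\beta_t s_t^\theta(x)$ makes this explicit: the loss becomes $\mathbb{E}\left[\frac{1}{\beta_t^2}\lVert\epsilon_t^\theta(\alpha_t z + \beta_t\epsilon) - \epsilon\rVert^2\right]$, so the network learns to predict $\epsilon$.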
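A minimal sketch of the denoising score matching loss and the noise-prediction view, assuming the linear schedule, a callable `score_model(x, t)` as a hypothetical stand-in for $s_t^\theta$, and (my own choice) $t$ drawn from $[0, 0.99]$ to keep $\beta_t$ away from zero. With single-point data the exact score is known, so its loss should vanish, and converting the exact score with $\epsilon = -\beta_t s$ should give back the injected noise.

```python
import numpy as np

def alpha(t):
    return t          # assumed linear schedule

def beta(t):
    return 1.0 - t

def dsm_loss(score_model, z, rng, t_max=0.99):
    """Monte Carlo DSM loss for the Gaussian path.

    Conditional score: -(x - alpha_t z) / beta_t^2 = -eps / beta_t.
    t ~ Unif[0, t_max] keeps beta_t away from 0 (t_max is an assumption for stability).
    """
    n = z.shape[0]
    t = rng.uniform(0.0, t_max, size=(n, 1))
    eps = rng.standard_normal(z.shape)
    x = alpha(t) * z + beta(t) * eps
    target = -eps / beta(t)
    return np.mean(np.sum((score_model(x, t) - target) ** 2, axis=1))

def noise_from_score(score, t):
    """Noise predictor eps_theta = -beta_t * s_theta (the 'denoising' view)."""
    return -beta(t) * score

rng = np.random.default_rng(0)
z0 = np.array([3.0, -1.0])
z = np.tile(z0, (1000, 1))  # toy data: a single point, so marginal score = conditional score

def exact_score(x, t):
    return -(x - alpha(t) * z0) / beta(t) ** 2

loss_exact = dsm_loss(exact_score, z, rng)

t = 0.3
eps = rng.standard_normal(2)
x = alpha(t) * z0 + beta(t) * eps
recovered_ok = np.allclose(noise_from_score(exact_score(x, t), t), eps)
print(loss_exact, recovered_ok)  # ~0 and True
```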

Conditional and marginal Score function

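Conditional score: $\nabla\log p_t(x|z)$. Marginal score: $\nabla\log p_t(x)$.

Derivation

Substituting the formula $p_t(x) = \int p_t(x|z)\,p_{\text{data}}(z)\,dz$ shown previously and moving the gradient inside the integral, we get

$$\nabla\log p_t(x) = \frac{\nabla p_t(x)}{p_t(x)} = \frac{\int\nabla p_t(x|z)\,p_{\text{data}}(z)\,dz}{p_t(x)}.$$

Using the result $\nabla p_t(x|z) = p_t(x|z)\,\nabla\log p_t(x|z)$:

$$\nabla\log p_t(x) = \int\nabla\log p_t(x|z)\,\frac{p_t(x|z)\,p_{\text{data}}(z)}{p_t(x)}\,dz.$$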

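What is the score of the conditional Gaussian probability path? Differentiating the log of the Gaussian density gives $\nabla\log p_t(x|z) = -\frac{x - \alpha_t z}{\beta_t^2}$.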

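Theorem: SDE extension trick

Define the conditional and marginal vector fields and scores as above. Then for any diffusion coefficient $\sigma_t \ge 0$,

$$X_0 \sim p_{\text{init}}, \quad dX_t = \left[u_t^{\text{target}}(X_t) + \frac{\sigma_t^2}{2}\nabla\log p_t(X_t)\right]dt + \sigma_t\,dW_t \implies X_t \sim p_t \quad (0 \le t \le 1).$$

$\sigma_t$ is the noise injected.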
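The theorem says any amount of noise works as long as the score correction is added. A minimal sketch to see this, under assumptions of mine: 1D data $p_{\text{data}} = \mathcal{N}(\mu, s^2)$ and $\alpha_t = t$, $\beta_t = 1 - t$, so that $p_t = \mathcal{N}(t\mu, v_t)$ with $v_t = t^2 s^2 + (1 - t)^2$ and both the marginal field and the marginal score are exact. Euler–Maruyama with $\sigma_t \in \{0, 0.5, 1\}$ should land on $\mathcal{N}(\mu, s^2)$ every time.

```python
import numpy as np

mu, s = 2.0, 0.5  # toy p_data = N(mu, s^2) in 1D (an assumption)

def var_t(t):
    """Variance of the marginal p_t = N(t mu, t^2 s^2 + (1 - t)^2)."""
    return t**2 * s**2 + (1 - t) ** 2

def u_marginal(x, t):
    """Exact marginal vector field for this toy setting (linear in x)."""
    b = (t * s**2 - (1 - t)) / var_t(t)
    return mu + b * (x - t * mu)

def score_marginal(x, t):
    """Exact marginal score grad log p_t(x)."""
    return -(x - t * mu) / var_t(t)

def sample(sigma, n=20000, n_steps=500, seed=0):
    """Euler-Maruyama for dX = [u_t(X) + sigma^2/2 * score_t(X)] dt + sigma dW, X_0 ~ N(0, 1)."""
    rng = np.random.default_rng(seed)
    h = 1.0 / n_steps
    x = rng.standard_normal(n)
    for k in range(n_steps):
        t = k * h
        drift = u_marginal(x, t) + 0.5 * sigma**2 * score_marginal(x, t)
        x = x + h * drift + sigma * np.sqrt(h) * rng.standard_normal(n)
    return x

stats = []
for sigma in [0.0, 0.5, 1.0]:
    x1 = sample(sigma)
    stats.append((sigma, x1.mean(), x1.std()))
    print(stats[-1])  # mean close to 2.0 and std close to 0.5 for every sigma
```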

Lecture 4

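A Guided CFM objective

$$\mathcal{L}_{\text{CFM}}^{\text{guided}}(\theta) = \mathbb{E}_{(z, y) \sim p_{\text{data}},\, t \sim \text{Unif},\, x \sim p_t(\cdot|z)}\left[\lVert u_t^\theta(x|y) - u_t^{\text{target}}(x|z)\rVert^2\right]$$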

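Classifier-free Guidance

$$\tilde u_t(x|y) = (1 - w)\,u_t^{\text{target}}(x) + w\,u_t^{\text{target}}(x|y),$$

where $w$ is the guidance scale ($w > 1$ amplifies the conditioning on $y$).

Fact (I am unable to understand the proper reason for the following equation): for a Gaussian probability path,

$$u_t^{\text{target}}(x|y) = a_t x + b_t\nabla\log p_t(x|y), \qquad (a_t, b_t) = \left(\frac{\dot\alpha_t}{\alpha_t},\ \frac{\dot\alpha_t\beta_t^2 - \dot\beta_t\beta_t\alpha_t}{\alpha_t}\right).$$

Bayes' Rule

$$\nabla\log p_t(x|y) = \nabla\log p_t(x) + \nabla\log p_t(y|x),$$

since $\nabla_x\log p_t(y) = 0$. Applying the fact to both $u_t^{\text{target}}(x|y)$ and $u_t^{\text{target}}(x)$ gives $u_t^{\text{target}}(x|y) = u_t^{\text{target}}(x) + b_t\nabla\log p_t(y|x)$. Scaling the classifier term by $w$ gives $\tilde u_t(x|y) = u_t^{\text{target}}(x) + w\,b_t\nabla\log p_t(y|x)$, which is exactly the formula at the start of this subsection.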

This procedure is for Classifier-free Guidance.

Classifier-free guidance sampling
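Sampling with classifier-free guidance just plugs the combined field into the ODE. A minimal sketch, assuming a hypothetical network interface `model(x, t, y)` where `y=None` plays the role of the empty label for the unconditional field, and a deliberately simplistic `toy_model` (not a real marginal field): its conditional field flows straight to a class centre and its unconditional field flows to the origin. With this toy, guided samples end at $w$ times the class centre, which shows how $w > 1$ exaggerates the difference between the conditional and unconditional predictions.

```python
import numpy as np

def guided_velocity(model, x, t, y, w):
    """Classifier-free guidance: (1 - w) u(x | empty) + w u(x | y)."""
    return (1.0 - w) * model(x, t, None) + w * model(x, t, y)

def sample_cfg(model, y, w, n=1000, d=2, n_steps=100, seed=0):
    """Euler simulation of the guided ODE from X_0 ~ N(0, I)."""
    rng = np.random.default_rng(seed)
    x = rng.standard_normal((n, d))
    h = 1.0 / n_steps
    for k in range(n_steps):
        x = x + h * guided_velocity(model, x, k * h, y, w)
    return x

CENTERS = {0: np.array([2.0, 0.0]), 1: np.array([-2.0, 0.0])}  # hypothetical class centres

def toy_model(x, t, y):
    """Hypothetical stand-in for a trained network: straight-line flow to a target point."""
    target = np.zeros(2) if y is None else CENTERS[y]
    return (target - x) / (1.0 - t)

ends = {}
for w in [0.0, 1.0, 3.0]:
    ends[w] = sample_cfg(toy_model, y=0, w=w)
    print(w, ends[w].mean(axis=0))  # w * [2, 0] for this toy model
```

In practice one network is trained with the label sometimes replaced by the empty label, so the same model provides both terms; that training detail is not shown here.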

Lecture 4

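It starts with this: what is a score function? It is the gradient of the log density, $\nabla\log p_t(x)$, and for the marginal path

$$\nabla\log p_t(x) = \int\nabla\log p_t(x|z)\,\frac{p_t(x|z)\,p_{\text{data}}(z)}{p_t(x)}\,dz.$$

Proof

We use this rule: $\nabla\log f(x) = \frac{\nabla f(x)}{f(x)}$.

The gradient of the log of the marginal is the gradient of the function divided by the function itself:

$$\nabla\log p_t(x) = \frac{\nabla p_t(x)}{p_t(x)} = \frac{\nabla\int p_t(x|z)\,p_{\text{data}}(z)\,dz}{p_t(x)}.$$

Swap the derivative and the integral. Which derivative? The gradient $\nabla$ with respect to $x$:

$$= \frac{\int\nabla p_t(x|z)\,p_{\text{data}}(z)\,dz}{p_t(x)}.$$

Apply the rule above in reverse, $\nabla p_t(x|z) = p_t(x|z)\,\nabla\log p_t(x|z)$:

$$= \int\nabla\log p_t(x|z)\,\frac{p_t(x|z)\,p_{\text{data}}(z)}{p_t(x)}\,dz.$$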
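The end result can be checked against a numerical gradient. A minimal sketch, assuming a toy 1D $p_{\text{data}}$ uniform over three points, the schedule $\alpha_t = t$, $\beta_t = 1 - t$ and a fixed $t = 0.6$: the posterior-weighted average of conditional scores should match a central difference of $\log p_t$.

```python
import numpy as np

data = np.array([-2.0, 1.0, 3.0])  # toy p_data: uniform over three points (an assumption)
t = 0.6
b = 1.0 - t                         # beta_t for alpha_t = t, beta_t = 1 - t

def log_p_marginal(x):
    """log p_t(x) = log mean_j N(x; t z_j, b^2), computed with log-sum-exp."""
    log_n = -((x[:, None] - t * data[None, :]) ** 2) / (2 * b**2) - np.log(np.sqrt(2 * np.pi) * b)
    m = log_n.max(axis=1, keepdims=True)
    return (m + np.log(np.exp(log_n - m).mean(axis=1, keepdims=True)))[:, 0]

def score_via_posterior(x):
    """Marginal score as the posterior-weighted average of conditional scores -(x - t z_j) / b^2."""
    log_w = -((x[:, None] - t * data[None, :]) ** 2) / (2 * b**2)
    w = np.exp(log_w - log_w.max(axis=1, keepdims=True))
    w /= w.sum(axis=1, keepdims=True)
    cond_scores = -(x[:, None] - t * data[None, :]) / b**2
    return (w * cond_scores).sum(axis=1)

x = np.linspace(-3.0, 4.0, 101)
h = 1e-5
fd_score = (log_p_marginal(x + h) - log_p_marginal(x - h)) / (2 * h)  # numerical gradient of log p_t
max_err = np.max(np.abs(fd_score - score_via_posterior(x)))
print(max_err)  # tiny
```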

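At this stage we are asked to read the notes. But the gist is that these two Gaussian examples,

$$u_t^{\text{target}}(x|z) = \left(\dot\alpha_t - \frac{\dot\beta_t}{\beta_t}\alpha_t\right)z + \frac{\dot\beta_t}{\beta_t}x \qquad\text{and}\qquad \nabla\log p_t(x|z) = -\frac{x - \alpha_t z}{\beta_t^2},$$

are similar (both are linear in $x$ and $z$) but have slightly different weights.

So we can map one onto the other using algebra, which leads to the following reparameterization.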

Reparameterization

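$$u_t^{\text{target}}(x) = \left(\beta_t^2\frac{\dot\alpha_t}{\alpha_t} - \dot\beta_t\beta_t\right)\nabla\log p_t(x) + \frac{\dot\alpha_t}{\alpha_t}x$$

This holds for the conditional versions as well as for the marginal ones, and the proof is in the notes. It seems that early diffusion models learned the score function and transformed it into a vector field.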
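The conversion is pure algebra, so it can be checked at random points. A minimal sketch for the conditional versions, assuming a cosine schedule $\alpha_t = \sin(\pi t/2)$, $\beta_t = \cos(\pi t/2)$ (my choice, picked so the check is not specific to the linear schedule) and times strictly inside $(0, 1)$ so that $\alpha_t, \beta_t > 0$:

```python
import numpy as np

# Cosine schedule (an assumption) and its time derivatives
def alpha(t): return np.sin(np.pi * t / 2)
def beta(t): return np.cos(np.pi * t / 2)
def alpha_dot(t): return np.pi / 2 * np.cos(np.pi * t / 2)
def beta_dot(t): return -np.pi / 2 * np.sin(np.pi * t / 2)

def u_cond(x, z, t):
    """Conditional Gaussian vector field."""
    return (alpha_dot(t) - beta_dot(t) / beta(t) * alpha(t)) * z + beta_dot(t) / beta(t) * x

def score_cond(x, z, t):
    """Conditional Gaussian score."""
    return -(x - alpha(t) * z) / beta(t) ** 2

def u_from_score(score, x, t):
    """Reparameterization: (beta^2 alpha_dot/alpha - beta_dot beta) * score + (alpha_dot/alpha) x."""
    coeff = beta(t) ** 2 * alpha_dot(t) / alpha(t) - beta_dot(t) * beta(t)
    return coeff * score + alpha_dot(t) / alpha(t) * x

rng = np.random.default_rng(0)
x, z = rng.standard_normal(3), rng.standard_normal(3)
checks = []
for t in [0.1, 0.5, 0.9]:
    checks.append(np.allclose(u_cond(x, z, t), u_from_score(score_cond(x, z, t), x, t)))
print(checks)  # [True, True, True]
```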

Score Matching

Key Points

Learning the marginal vector field and learning the Score function are equivalent for Gaussian Probability Paths.

Denoising score matching is a simple way of learning Marginal Score functions by approximating Conditional Score Functions.

Sampling with score models is achieved by adding the desired amount of noise and applying a correction (the $\frac{\sigma_t^2}{2}\nabla\log p_t$ term) to the vector field.