Writing loop logic in TensorFlow

March 7, 2021

The programming paradigm that TensorFlow requires is not the one I normally use, and it takes a few tricks to get used to it. Add eager execution, which became the default in TensorFlow 2, and it can be hard.

Recently I answered a question on Stack Overflow about writing a loop so that it takes advantage of the GPU. My desktop has an old NVIDIA GPU and my Mac has an AMD GPU, so neither was useful for testing this code. But I managed to rewrite the loop using TensorFlow 2.

The original code is this.

    import numpy as np

    def multivariate_data(dataset, target, start_index, end_index, history_size,
                          target_size, step, single_step=False):
        data = []
        labels = []

        # The first window needs history_size earlier samples.
        start_index = start_index + history_size
        if end_index is None:
            end_index = len(dataset) - target_size

        for i in range(start_index, end_index):
            # Every step-th sample of the history window that ends just before i.
            indices = range(i - history_size, i, step)
            data.append(dataset[indices])

            if single_step:
                labels.append(target[i + target_size])
            else:
                labels.append(target[i:i + target_size])

        return np.array(data), np.array(labels)
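To see what a single pass of that loop produces, here is a minimal sketch on a toy series. The array and the parameter values are made up for illustration; only the indexing expressions come from the function above.

```python
import numpy as np

# Toy series 0..9 (illustrative data, not from the original question).
dataset = np.arange(10)
history_size, target_size, step = 3, 2, 1

i = 3  # first valid position when start_index is 0 and history_size is 3

# History window: the history_size samples that end just before position i.
indices = range(i - history_size, i, step)
window = dataset[indices]

# The two kinds of label the function can produce for this position.
label_single = dataset[i + target_size]   # one value, target_size steps ahead
label_multi = dataset[i:i + target_size]  # the next target_size values

print(window, label_single, label_multi)
```

Each iteration builds one such window and one label, so the work per iteration is just indexing and appending.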

I will add a diagram or two with some explanation later on. I draw this type of diagram with pdflatex (/Library/TeX/texbin/pdflatex) and my TikZ editor, and I plan to generate a PDF from the text and diagrams later.

The rewritten code creates an empty 1-D tensor and fills it with values based on conditions in the loop. It is as simple as it gets, but it is enough to understand how loops operate in TensorFlow.

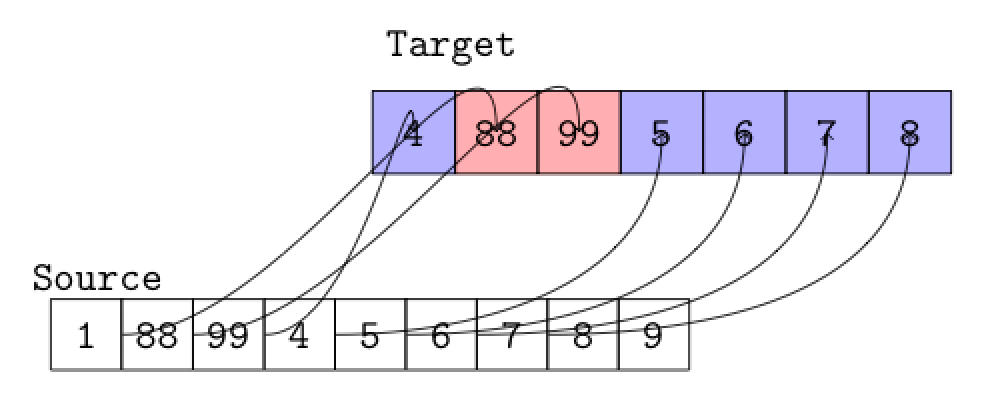

Notice that values can be picked from the source tensor and appended to the target like this. The same pattern works for a range of indices, as the final code shows. This line of code begs for a diagram, because the higher the rank of a tensor, the harder it is to visualize what is happening. Remember that the target here is a 1-D (rank 1) tensor.

    self._data = tf.concat([self._data, [tf.gather(dataset, i)]], 0)
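As a stand-alone sketch of that pattern, the snippet below appends first a single gathered value and then a gathered range to an empty rank-1 tensor. The variable names `data`, `one` and `some` are mine, and I use `tf.expand_dims` where the line above wraps the value in a Python list; both are just ways to turn the rank-0 result into a rank-1 tensor before concatenating.

```python
import tensorflow as tf

dataset = tf.constant([1, 88, 99, 4, 5, 6, 7, 8, 9])

# Empty rank-1 accumulator with the same dtype as the source.
data = tf.zeros((0,), dtype=dataset.dtype)

# A scalar index gives a rank-0 result, so it needs one extra axis
# before it can be concatenated to a rank-1 tensor.
one = tf.gather(dataset, 3)
data = tf.concat([data, tf.expand_dims(one, axis=0)], axis=0)

# A range of indices already gives a rank-1 result.
some = tf.gather(dataset, tf.range(1, 3))
data = tf.concat([data, some], axis=0)

print(data)
```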

The final code is this.

    import tensorflow as tf

    class MultiVariate():

        def __init__(self):
            self._data = None
            self._labels = None

        def multivariate_data(self,
                              dataset,
                              start_index,
                              end_index,
                              history_size,
                              target_size,
                              single_step=False):
            start_index = start_index + history_size
            print("end_index ", end_index)
            print("start_index ", start_index)

            # Start with empty 1-D int32 tensors.
            if self._data is None:
                self._data = tf.cast(tf.Variable(tf.reshape((), (0,))), dtype=tf.int32)
            if self._labels is None:
                self._labels = tf.cast(tf.Variable(tf.reshape((), (0,))), dtype=tf.int32)

            if end_index is None:
                end_index = len(dataset) - target_size

            def cond(i, j):
                # Keep looping while i < j.
                return tf.less(i, j)

            def body(i, j):
                # Gather a single value and append it.
                # Rebinding self._data from inside body relies on eager execution.
                self._data = tf.concat([self._data, [tf.gather(dataset, i)]], 0)
                if i == start_index:
                    # On the first iteration, also gather a range of values and append it.
                    self._data = tf.concat([self._data, tf.gather(dataset, tf.range(1, 3, 1))], 0)
                return tf.add(i, 1), j

            _, _ = tf.while_loop(cond, body, [start_index, end_index],
                                 shape_invariants=[start_index.get_shape(), end_index.get_shape()])

            return self._data

    mv = MultiVariate()

    d = mv.multivariate_data(
        tf.constant([1, 88, 99, 4, 5, 6, 7, 8, 9]),  # dataset
        tf.constant(2),                              # start_index
        tf.constant(8),                              # end_index
        tf.constant(1),                              # history_size
        tf.constant(2),                              # target_size
        tf.constant(2))                              # single_step (not used in this version)

    print("print ", d)
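The class above keeps its result in `self._data` and rebinds it inside the loop body, which works under eager execution. A variant that should also work inside `tf.function` is to pass the accumulator through the loop as a loop variable and declare its growing shape with `shape_invariants`. This is only a sketch of that idea, not the code from my answer: the function name `collect` is hypothetical, and the special case for the first iteration is left out to keep it short.

```python
import tensorflow as tf

@tf.function
def collect(dataset, start_index, end_index):
    # Hypothetical helper: gathers dataset[start_index:end_index] one element
    # at a time, carrying the accumulator as a loop variable.
    data = tf.zeros((0,), dtype=dataset.dtype)

    def cond(i, data):
        return tf.less(i, end_index)

    def body(i, data):
        value = tf.gather(dataset, i)
        data = tf.concat([data, tf.expand_dims(value, axis=0)], axis=0)
        return tf.add(i, 1), data

    # The accumulator grows on every iteration, so its length is declared
    # as unknown (None) in the shape invariant.
    _, data = tf.while_loop(
        cond, body, [start_index, data],
        shape_invariants=[tf.TensorShape([]), tf.TensorShape([None])])
    return data

result = collect(tf.constant([1, 88, 99, 4, 5, 6, 7, 8, 9]),
                 tf.constant(3), tf.constant(8))
print(result)
```

Without the `None` in the second shape invariant, TensorFlow would expect the accumulator to keep the same shape on every iteration.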

I’m curious about the utilization of ‘cond’ and ‘body’ functions in tf.while_loop(). They seem to serve as foundational elements for the loop. Is my understanding correct that the ‘cond’ function effectively serves as the condition for the loop to occur, while ‘body’ articulates the operation that you want to conduct within the loop?

Yes. It is a simple TensorFlow loop.
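A minimal sketch of the two roles, using a made-up example that sums the integers 0 to 4: `cond` is evaluated before each iteration and decides whether the loop continues, and `body` returns the updated loop variables.

```python
import tensorflow as tf

def cond(i, total):
    # Checked before every iteration; the loop stops when this is False.
    return tf.less(i, 5)

def body(i, total):
    # Must return the loop variables in the same order and structure.
    return tf.add(i, 1), tf.add(total, i)

i, total = tf.while_loop(cond, body, [tf.constant(0), tf.constant(0)])
print(i, total)
```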