Custom Code to create Ragged Tensor

May 28, 2021

I have been preparing to write a longer piece about TensorFlow with TikZ diagrams. Eventually there will be enough pages for a short book, and I have been looking for tools to generate the book’s text, TikZ diagrams and code as a PDF.

I know that descriptions are important too and just colorful diagrams won’t cut it. But I am trying. I will add more descriptions and diagrams to this same post till I am satisfied.

A RaggedTensor is a tensor with one or more ragged dimensions, which are dimensions whose slices may have different lengths.

tf.RaggedTensor is part of the TensorFlow library. This code attempts to do the same.
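For comparison, here is a minimal sketch of what the built-in class gives you. It assumes TensorFlow 2.x running eagerly and uses the same source values and row positions as the rest of this post; the variable names are only illustrative.

```python
import tensorflow as tf

# Source values and the row position of each value.
values = [3, 1, 4, 2, 5, 9, 2]
row_ids = [0, 0, 0, 0, 1, 1, 2]

# Build the ragged tensor from a flat list of values plus one row id per value.
ragged = tf.RaggedTensor.from_value_rowids(values=values, value_rowids=row_ids)
print(ragged)

# Convert to a dense tensor, padding the short rows with -999.
padded = ragged.to_tensor(default_value=-999)
print(padded)
```

The custom code in this post aims for the padded form, not for the ragged object itself.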

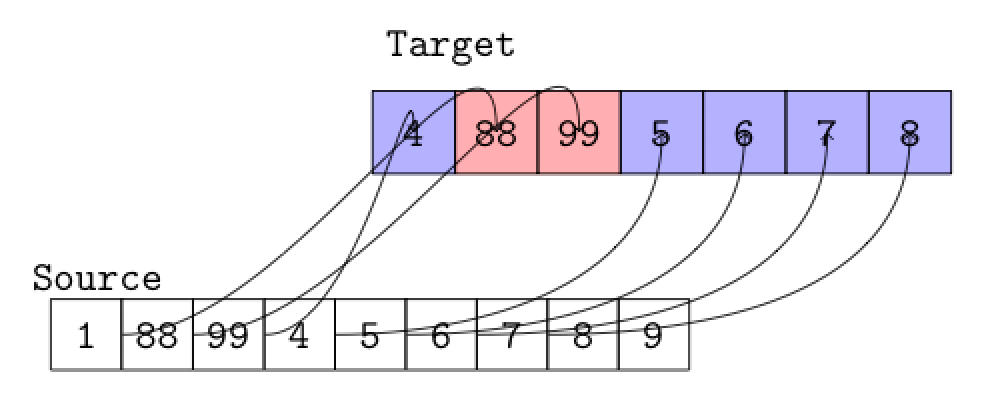

We start with the source [3, 1, 4, 2, 5, 9, 2] and a template showing the row position like this [0, 0, 0, 0, 1, 1, 2].

Our map is like this.

The value repeated most often in the template is 0, and it occurs 4 times. So we store the first 4 values (3, 1, 4, 2) from the source in row 1. Row 2 has the values 5 and 9. Since every row needs 4 values, we fill the next two positions in row 2 with -999. Row 3 has only the value 2, so its other 3 positions are filled with -999.
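Before bringing TensorFlow into it, the mapping can be sketched in plain Python. This is only an illustration of the idea, not the TensorFlow code from this post: pad_rows is a made-up helper, and it assumes the template is sorted so that the values of each row sit next to each other in the source.

```python
from collections import Counter

def pad_rows(source, template, filler=-999):
    """Group source values by row id and pad every row to the longest row."""
    counts = Counter(template)        # row id -> number of values in that row
    width = max(counts.values())      # length of the longest row
    rows = []
    begin = 0
    for row_id in sorted(counts):
        size = counts[row_id]
        row = list(source[begin:begin + size])
        row += [filler] * (width - size)  # fill the remaining positions
        rows.append(row)
        begin += size
    return rows

rows = pad_rows([3, 1, 4, 2, 5, 9, 2], [0, 0, 0, 0, 1, 1, 2])
for row in rows:
    print(row)
```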

There are many ways to code this, but you can start with

    elements, index, count = tf.unique_with_counts([0, 0, 0, 0, 1, 1, 2])
    print('Elements ', elements)

which gives you all the data you need. The code below then fills up the ‘ragged’ tensor with the ‘filler’.

Note: I have hard-coded the row length 4 in the check slice.shape[0] < 4. This is the number of times the most repeated value occurs, but you can obtain it from tf.unique_with_counts and pass it in. I also don’t account for missing values, as in the template [0, 0, 0, 0, 2]. But elements in the code above gives you what is present, so you could add a row of ‘fillers’ using a simple loop when you find a value missing.

    import tensorflow as tf

    fill_value = tf.constant([-999])  # value used to pad short rows

    # count[k] is the number of source values that belong to row k
    elements, index, count = tf.unique_with_counts([0, 0, 0, 0, 1, 1, 2])
    print('Elements ', elements)

    values = [3, 1, 4, 2, 5, 9, 2]

    ta = tf.TensorArray(dtype=tf.int32, size=1, dynamic_size=True,
                        clear_after_read=False)

    def fill_values(slice, i):
        # Pad the slice with fill_value up to the hard-coded row length of 4
        slices = slice
        if slice.shape[0] < 4:
            for j in range(4 - slice.shape[0]):
                slices = tf.concat([slices, fill_value], 0)
        tf.print('Fill ', slices)
        # The TensorArray returned by write() should be used by the caller
        return ta.write(i, slices)

    def slices(begin, c, i, filler):
        global ta  # keep the result of write(); this code relies on eager execution
        # Take the next c[i] values from the source, starting at begin
        slice = tf.slice(values, begin=[begin], size=[c[i]])
        begin = begin + c[i]
        tf.print('Slice', slice)
        ta = fill_values(slice, i)
        print('TensorArray ', ta.stack())
        return [begin, c, tf.add(i, 1), filler]

    def condition(begin, c, i, _):
        # Loop once per row
        return tf.less(i, tf.size(c))

    i = tf.constant(0)
    filler = tf.constant(-999)
    r = tf.while_loop(condition, slices, [0, count, i, filler])
    print('TensorArray ', ta.stack())
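The note above leaves two gaps: the hard-coded row length of 4 and row positions that never occur in the template. One possible way to close both, sketched here assuming TensorFlow 2.x in eager mode, is to take the row length from the counts with tf.reduce_max and to get counts that include empty rows from tf.math.bincount. The names template, max_len and row_counts are mine and are not part of the code above.

```python
import tensorflow as tf

# Template with a gap: row position 1 never occurs.
template = tf.constant([0, 0, 0, 0, 2])

# unique_with_counts only reports the row positions that are present.
elements, index, count = tf.unique_with_counts(template)

# Length of the longest row, instead of the hard-coded 4.
max_len = tf.reduce_max(count)

# bincount reports a count for every position from 0 up to the largest one,
# so a missing row shows up with a count of 0.
row_counts = tf.math.bincount(template)

print('Present rows', elements.numpy())
print('Longest row ', max_len.numpy())
print('Row counts  ', row_counts.numpy())
```

A row whose count is 0 can then be written as a full row of fillers, and max_len can replace the 4 in the length check.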