Spectral Clustering

October 27, 2014 Leave a comment

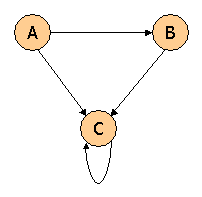

2 ----6 / \ | 1 4 | \ / \ | 3 5

The goal is to find two clusters in this graph using Spectral Clustering on the Laplacian matrix. Compute the Laplacian of this graph. Then compute the second eigen vector of the Laplacian (the one corresponding to the second smallest eigenvalue).

A = matrix(c(0,1,1,0,0,0,

1,0,0,1,0,1,

1,0,0,1,0,0,

0,1,1,0,1,0,

0,0,0,1,0,1,

0,1,0,0,1,0),nrow=6,ncol=6,byrow=TRUE)

colnames(A) <- c("1","2","3","4","5","6")

rownames(A) <- c("1","2","3","4","5","6")

B = matrix(c(2,0,0,0,0,0,

0,3,0,0,0,0,

0,0,2,0,0,0,

0,0,0,3,0,0,

0,0,0,0,2,0,

0,0,0,0,0,2),nrow=6,ncol=6,byrow=TRUE)

L = B - A

print(L)

e <- eigen(L)

R can be used to get the eigen values and vectors. But Wolfram gives these values.

Eigenvalues

Eigenvectors

The second highest eigen value is

So the 5th row of the eigen vector matrix is

This means that the

1st and 3rd nodes are part of one cluster and 5th and 6th nodes are part of the other cluster. 2nd and 3rd nodes can be part of either cluster.